Qwen 3.5 Running Locally on iPhone 17 Pro

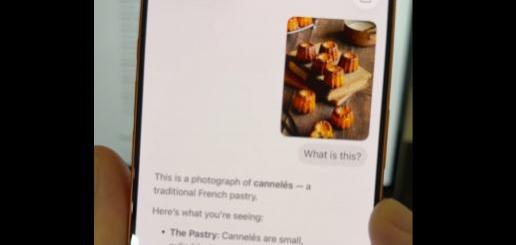

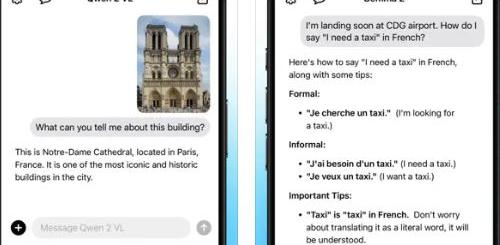

Many people assume that you need high-end hardware to run capable AI models. In practice, a recent phone and a suitably sized, quantized version of the model can be enough. Locally AI can already run Llama, Gemma, and Qwen models on your iPhone using Apple's MLX framework. Because the models run on-device, you can use them offline, which helps preserve your privacy. Adrien Grondin has shared a video that shows Qwen 3.5 running on an iPhone 17 Pro. The model shown is the 2B-parameter variant quantized to 6 bits, running on MLX, which is optimized for Apple Silicon.
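To see why a model of this size can fit on a phone, here is a rough back-of-the-envelope estimate of the weight storage alone. It is only a sketch under stated assumptions: "2B" is treated as exactly 2 billion parameters, every weight is assumed to take exactly 6 bits, and quantization metadata, the KV cache, and activations are ignored. The function name `weight_footprint_gb` is made up for this illustration and is not part of MLX or Locally AI.

```python
def weight_footprint_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in decimal gigabytes.

    Assumption: every parameter is stored at exactly `bits_per_weight` bits.
    Real quantized checkpoints also carry extra metadata, so actual files
    are somewhat larger than this estimate.
    """
    total_bits = num_params * bits_per_weight
    total_bytes = total_bits / 8  # 8 bits per byte
    return total_bytes / 1e9      # decimal GB


# Nominal 2B-parameter model at 6 bits vs. an unquantized 16-bit baseline.
six_bit_gb = weight_footprint_gb(2e9, 6)
sixteen_bit_gb = weight_footprint_gb(2e9, 16)

print(f"6-bit weights:  ~{six_bit_gb:.1f} GB")
print(f"16-bit weights: ~{sixteen_bit_gb:.1f} GB")
```

Under these assumptions the 6-bit weights come to roughly 1.5 GB, compared with about 4 GB at 16 bits. Actual memory use on the device will be higher once runtime overhead is included.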

> The new Qwen 3.5 by @Alibaba_Qwen running on-device on iPhone 17 Pro.
>
> Qwen 3.5 beats models 4 times its size, has strong visual understanding, and can toggle reasoning on or off.
>
> The 2B 6-bit model here is running with MLX optimized for Apple Silicon. pic.twitter.com/GsGGzur0og
>
> Adrien Grondin (@adrgrondin), March 2, 2026